Author Archives: sam

Turning a camera into an instrument (of sorts).

I worked with Aaron Arntz to produce this little experiment. The idea is that the sketch splits the camera image into blocks, captures color values for each block, and then plays music whenever the colors shift… It’s a bit of a mess right now, but it works!

I dealt with image processing and display, and Aaron used the Minim library to produce the sounds.
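For anyone curious how the block idea might look in code, here is a minimal sketch in plain Java. It is not the project's actual code: the class name `BlockColorTracker`, the packed `0xRRGGBB` pixel layout, and the `threshold` value are all assumptions made for illustration. It averages the color of each block in a frame and flags the blocks whose average moved by more than the threshold since the previous frame.

```java
import java.util.Arrays;

// Hypothetical helper, not the project's actual code: averages the color of
// each grid block in a frame and reports which blocks changed noticeably.
class BlockColorTracker {
    final int cols, rows, blockSize;
    final int threshold;     // assumed: summed per-channel difference that counts as a "shift"
    private int[] previous;  // average color per block from the last frame (packed RGB)

    BlockColorTracker(int width, int height, int blockSize, int threshold) {
        this.blockSize = blockSize;
        this.cols = width / blockSize;
        this.rows = height / blockSize;
        this.threshold = threshold;
    }

    // Average the packed 0xRRGGBB pixels of one square block.
    static int averageBlock(int[] pixels, int width, int x0, int y0, int size) {
        long r = 0, g = 0, b = 0;
        for (int y = y0; y < y0 + size; y++) {
            for (int x = x0; x < x0 + size; x++) {
                int p = pixels[y * width + x];
                r += (p >> 16) & 0xFF;
                g += (p >> 8) & 0xFF;
                b += p & 0xFF;
            }
        }
        int n = size * size;
        return (int) (((r / n) << 16) | ((g / n) << 8) | (b / n));
    }

    // Sum of absolute per-channel differences between two packed colors.
    static int distance(int a, int b) {
        return Math.abs(((a >> 16) & 0xFF) - ((b >> 16) & 0xFF))
             + Math.abs(((a >> 8) & 0xFF) - ((b >> 8) & 0xFF))
             + Math.abs((a & 0xFF) - (b & 0xFF));
    }

    // Returns one flag per block: true where the color shifted since the last frame.
    // The first call only records a baseline, so every flag is false.
    boolean[] update(int[] pixels, int width) {
        int[] current = new int[cols * rows];
        boolean[] shifted = new boolean[cols * rows];
        for (int by = 0; by < rows; by++) {
            for (int bx = 0; bx < cols; bx++) {
                int i = by * cols + bx;
                current[i] = averageBlock(pixels, width, bx * blockSize, by * blockSize, blockSize);
                if (previous != null) {
                    shifted[i] = distance(current[i], previous[i]) > threshold;
                }
            }
        }
        previous = current;
        return shifted;
    }

    public static void main(String[] args) {
        int w = 4, h = 4;
        BlockColorTracker tracker = new BlockColorTracker(w, h, 2, 30);
        int[] frame = new int[w * h];   // an all-black 4x4 frame
        tracker.update(frame, w);       // first frame only sets the baseline
        frame[0] = frame[1] = frame[4] = frame[5] = 0xFF0000; // top-left block turns red
        System.out.println(Arrays.toString(tracker.update(frame, w)));
        // prints [true, false, false, false]
    }
}
```

In a sketch like the one described, each `true` flag would be the point where a sound gets triggered. That part is left out here because it depends on how the audio side was wired up.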

The code’s over on GitHub.

And here’s a video of the project in action:

[vimeo https://vimeo.com/75279998 w=640]

As you can see, something went terribly wrong/right with my recording setup…

“I can always make more”

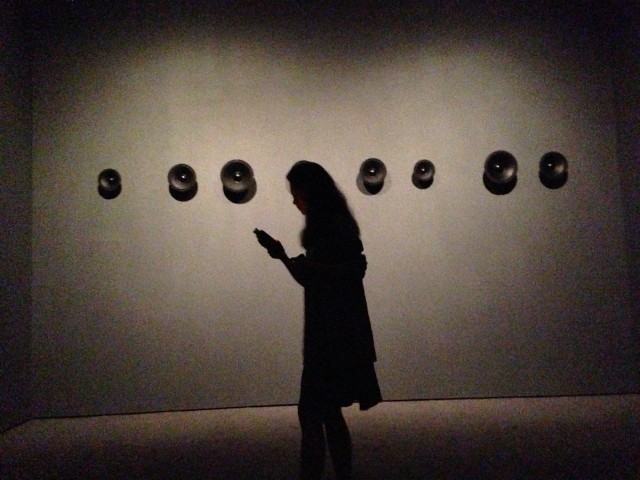

Here are the results of my collaboration with Billy Dang and Yiyang Liang, tentatively called “I can always make more”. The audio clips come from field recordings we made at MoMA (including recordings of audio installation pieces), interviews with strangers and friends, and sounds of the city.

[soundcloud url="http://api.soundcloud.com/tracks/111100701" params="" width="100%" height="166" iframe="true" /]

Here are some pics of the recording process:

Prisms

For my second computational media project I worked with Brian Clifton to make this prism extruding thingy. We were inspired by the code we found here.

Click here to see the sketch on openprocessing.org. You can mouse over to extrude the triangles, click them to change their color, or move the slider (or mouse wheel) to change their max height.

Here’s a vid:

[vimeo https://vimeo.com/74787712 w=640]

And here’s the code:


```java
int grid = 20;
float b = 0;
ArrayList<Prism> prisms;

// Slider variables
boolean dragging = false; // Is the slider being dragged?
boolean rollover = false; // Is the mouse over the slider?

// Rectangle variables for slider
float rx = 50;
float ry = 500;
float rw = 10;
float rh = 100;

// Start and end of slider
float sliderStart = 70;
float sliderEnd = 570;
float offsetX = 0; // Offset for dragging slider

void setup() {
  size(640, 640);
  prisms = new ArrayList<Prism>();
  // Fill the canvas with one randomly oriented right triangle per grid cell
  for (int y = 0; y < height; y += grid) {
    for (int x = 0; x < width; x += grid) {
      float r = random(1);
      color c = color(noise(y, x)*255, noise(y, x)*200, noise(y, x)*55, random(255));
      if (r < 0.25) {
        // add a new instance of the class Prism to the ArrayList prisms
        prisms.add(new Prism(x, y, x, y+grid, x+grid, y+grid, c));
      } else if (r >= 0.25 && r < 0.5) {
        c = color(noise(r)*105, noise(r)*200, noise(r)*155, random(255));
        prisms.add(new Prism(x, y, x+grid, y, x, y+grid, c));
      } else if (r >= 0.5 && r < 0.75) {
        prisms.add(new Prism(x, y, x+grid, y, x+grid, y+grid, c));
      } else {
        prisms.add(new Prism(x+grid, y, x+grid, y+grid, x, y+grid, c));
      }
    }
  }
}

void draw() {
  background(255);
  for (int i = 0; i < prisms.size(); i++) {
    prisms.get(i).display();
  }

  // Map the slider position to a growth speed between 2 and 6
  b = (int) map(rx, sliderStart, sliderEnd - rw, 2, 6);

  // Translucent panel behind the slider
  noStroke();
  fill(color(255, 255, 255, 220));
  rect(50, 480, 540, 140);

  // Row of bars that get taller from left to right
  float d = sliderStart;
  while (d < sliderEnd) {
    for (float h = -10; h > -100; h = h-2.71) {
      fill(color(255, 0, 0, 60));
      noStroke();
      rect(d, 600, 10, h);
      d = d+14.848;
    }
  }

  if (dragging) {
    rx = mouseX + offsetX;
  }
  // Keep rectangle within limits of slider
  rx = constrain(rx, sliderStart, sliderEnd - rw);

  stroke(0);
  if (dragging) {
    fill(color(255, 77, 90));
  } else {
    fill(color(255, 128, 137));
  }
  // Draw rectangle for slider
  noStroke();
  rect(rx, ry, rw, rh);
}

void mousePressed() {
  // Did I click on slider?
  if (mouseX > rx && mouseX < rx + rw && mouseY > ry && mouseY < ry + rh) {
    dragging = true;
    // If so, keep track of relative location of click to corner of rectangle
    offsetX = rx - mouseX;
  }
}

void mouseReleased() {
  // Stop dragging
  dragging = false;
  // Iterate backwards so that removing an element does not skip the next one
  for (int i = prisms.size() - 1; i >= 0; i--) {
    if (prisms.get(i).should_grow()) {
      prisms.remove(i);
    }
  }
}

void mouseWheel(MouseEvent event) {
  float e = event.getAmount();
  rx -= e;
}

class Prism {
  int x1, x2, x3, y1, y2, y3, max_height, current_height, desired_height,
      x_offset, current_x_offset, desired_x_offset, mouseRange;
  color c;

  Prism(int tempx1, int tempy1, int tempx2, int tempy2, int tempx3, int tempy3, color tempcolor) {
    x1 = tempx1;
    y1 = tempy1;
    x2 = tempx2;
    y2 = tempy2;
    x3 = tempx3;
    y3 = tempy3;
    c = tempcolor;
    max_height = (int) random(100);
    current_height = 1;
    current_x_offset = 0;
    desired_x_offset = 0;
    mouseRange = (int) random(10, 50);
    x_offset = (int) random(30, 100);
  }

  boolean should_grow() {
    // needs to be fixed because some prisms aren't getting picked up
    if (mouseX > x1 - mouseRange && mouseX < x1 + grid + mouseRange &&
        mouseY > y1 - mouseRange && mouseY < y1 + grid + mouseRange) {
      return true;
    } else {
      return false;
    }
  }

  // Ease the current height and offset toward their targets
  void change_height() {
    if (current_height < desired_height) {
      current_height += b;
    } else if (current_height > desired_height) {
      current_height -= 1;
    }

    if (current_x_offset < desired_x_offset) {
      current_x_offset += b;
    } else if (current_x_offset > desired_x_offset) {
      current_x_offset -= 1;
    }
  }

  void change_color() {
    c = color(random(255), random(255), random(255), random(255));
  }

  void display() {
    fill(c);
    if (should_grow()) {
      desired_height = max_height + int(b) * 20;
      desired_x_offset = x_offset;
    } else {
      desired_height = 0;
      desired_x_offset = 0;
    }

    noStroke();
    triangle(x1, y1, x2, y2, x3, y3);

    if (current_height > 0 || current_x_offset > 0) {
      stroke(255);
      // Three side faces of the extruded prism
      quad(x1, y1, x2, y2, x2 - current_x_offset, y2 - current_height, x1 - current_x_offset, y1 - current_height);
      quad(x1, y1, x3, y3, x3 - current_x_offset, y3 - current_height, x1 - current_x_offset, y1 - current_height);
      quad(x2, y2, x3, y3, x3 - current_x_offset, y3 - current_height, x2 - current_x_offset, y2 - current_height);
      // Top face
      triangle(x1 - current_x_offset, y1 - current_height, x2 - current_x_offset, y2 - current_height, x3 - current_x_offset, y3 - current_height);
    }

    change_height();
  }
}
```
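One possible reason for the note in `should_grow()` about prisms not getting picked up: the test treats `(x1, y1)` as the top-left corner of the grid cell, but the last of the four orientations created in `setup()` passes `x+grid` as its first vertex, so its hit box ends up shifted one cell to the right. The sketch below, in plain Java, tests against the bounding box of all three vertices instead. The class `PrismHitTest` and the method `nearTriangle` are made-up names for illustration, and this is only a guess at the cause, not a confirmed fix.

```java
// Hypothetical alternative to should_grow(): hit-test against the bounding box
// of all three vertices instead of assuming (x1, y1) is the cell's top-left corner.
class PrismHitTest {
    static boolean nearTriangle(int px, int py,
                                int x1, int y1, int x2, int y2, int x3, int y3,
                                int range) {
        int minX = Math.min(x1, Math.min(x2, x3));
        int maxX = Math.max(x1, Math.max(x2, x3));
        int minY = Math.min(y1, Math.min(y2, y3));
        int maxY = Math.max(y1, Math.max(y2, y3));
        return px > minX - range && px < maxX + range
            && py > minY - range && py < maxY + range;
    }

    public static void main(String[] args) {
        int grid = 20, x = 100, y = 100, range = 10;
        // Fourth orientation from setup(): the first vertex is the cell's top-right corner.
        int x1 = x + grid, y1 = y, x2 = x + grid, y2 = y + grid, x3 = x, y3 = y + grid;
        int mouseX = 95, mouseY = 110; // just left of the cell, within range

        // The original test, anchored on (x1, y1)
        boolean original = mouseX > x1 - range && mouseX < x1 + grid + range
                        && mouseY > y1 - range && mouseY < y1 + grid + range;
        boolean boxed = nearTriangle(mouseX, mouseY, x1, y1, x2, y2, x3, y3, range);

        System.out.println(original + " " + boxed); // prints "false true"
    }
}
```

Inside the `Prism` class this would amount to replacing the condition in `should_grow()` with the bounding-box comparison, using `mouseRange` as the range.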

What is Interactivity?

A few thoughts on Bret Victor’s “The Future of Interaction Design”…

Victor begins his invective against current trends in interaction design with a video produced by Microsoft that depicts various lonely souls swiping at paper-thin screens in a not-too-distant future. It makes for a good target and feels at home in Microsoft’s line of depressing videos about the current and could-be future state of technology. The characters in it seem so alienated and the overall mood so melancholy that I was actually surprised that the man at the subway station at 1:52 doesn’t just go ahead and step in front of the oncoming train. He really looks like he’s considering it:

[youtube http://www.youtube.com/watch?v=a6cNdhOKwi0&t=1m45s&w=640]

Victor laments the lack of vision in this version of the future, and notes that the paradigm of the tablet, which has only recently become a reality, in fact originated with Alan Kay in 1968. The vision has not advanced significantly since then, and the tools that we use do not come close to leveraging our expressive capacity (although I do think that the glass smartphone does, ironically, enhance human capability: in this case it is the human capability to swipe ineffectually at a world that you are alienated from).

Chris Crawford describes interactivity as a kind of infinite loop of “listen, think, speak” between two actors. Victor, I think, would want to collapse that loop and make it entirely invisible to the actors. Victor has described his work almost as a cure for blindness, saying that good interactivity allows the user to “see what you’re doing” and “try ideas as you think of them”. This type of interaction, which seeks to enhance understanding, generate unexpected ideas, and aid in creativity, requires tools that are able to leverage a full range of sensory input and output: not merely the eyes, but also the ears, the hands, and ultimately the entire body.