Audiogrep: Automatic Audio “Supercuts”

Audiogrep is a Python script that transcribes audio files and then creates audio “supercuts” based on search phrases. It uses CMU Pocketsphinx for speech-to-text, and pydub to splice audio segments together.
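To make the transcribe, search, splice idea concrete, here is a minimal sketch. It is not audiogrep's actual code: the `Segment` layout and the `find_segments` and `splice` helpers are names I'm making up for illustration, and the transcript is hand-written rather than produced by Pocketsphinx. The only pydub calls used are `AudioSegment.from_file`, `AudioSegment.empty`, millisecond slicing and `export`.

```python
import re
from typing import List, NamedTuple, Tuple


class Segment(NamedTuple):
    """One transcribed phrase and its position in the audio, in seconds."""
    text: str
    start: float
    end: float


def find_segments(segments: List[Segment], pattern: str) -> List[Tuple[int, int]]:
    """Return (start_ms, end_ms) for every segment whose text matches pattern."""
    regex = re.compile(pattern, re.IGNORECASE)
    return [
        (round(seg.start * 1000), round(seg.end * 1000))
        for seg in segments
        if regex.search(seg.text)
    ]


def splice(audio_path: str, ranges: List[Tuple[int, int]], out_path: str) -> None:
    """Cut each range out of the source file and join the cuts into one file."""
    # Imported here so the search step above works without pydub installed.
    from pydub import AudioSegment

    source = AudioSegment.from_file(audio_path)
    supercut = AudioSegment.empty()
    for start_ms, end_ms in ranges:
        supercut += source[start_ms:end_ms]  # pydub slices by milliseconds
    supercut.export(out_path, format="mp3")  # mp3 export assumes ffmpeg is available


# Hypothetical transcript; a real one would come from the speech-to-text step.
transcript = [
    Segment("we had a lot of data", 0.0, 2.5),
    Segment("and nobody read it", 2.5, 4.0),
    Segment("the data was wrong", 4.0, 6.25),
]

ranges = find_segments(transcript, r"\bdata\b")
print(ranges)  # [(0, 2500), (4000, 6250)]
```

With a real file, something like `splice("book.mp3", ranges, "supercut.mp3")` would write the joined clips; both file names are placeholders.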

This is a sister project to my videogrep script, which does a similar thing but with video (and makes use of subtitle tracks rather than speech-to-text).

So far I’ve mostly been experimenting with audio books. Here, for example, are all the phrases in How Google Works by Eric Schmidt and Jonathan Rosenberg that contain the word “data”.

[soundcloud url="https://api.soundcloud.com/tracks/192358628" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]

And here are all the references to “private wealth” in Capital in the Twenty-first Century by Thomas Piketty:

[soundcloud url="https://api.soundcloud.com/tracks/192358627" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]

You can also extract just individual words, rather than phrases.

For example, here are all instances of “money” and “people” from the book The Automatic Millionaire: A Powerful One-Step Plan to Live and Finish Rich by David Bach:

[soundcloud url="https://api.soundcloud.com/tracks/192352602" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]

Here are “control”, “psychological”, “behavior” and “situations” from the nightmarishly titled Get Anyone to Do Anything: Never Feel Powerless Again — With Psychological Secrets to Control and Influence Every Situation by David J. Lieberman:

[soundcloud url="https://api.soundcloud.com/tracks/192352607" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]

And here are “relax” and “large” from Breast Enlargement Hypnosis, a truly remarkable audio experience by Victoria Gallagher:

[soundcloud url="https://api.soundcloud.com/tracks/192352619" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]

Another experiment from the same amazing source:

[soundcloud url="https://api.soundcloud.com/tracks/192353162" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]
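The single-word supercuts above need word-level timing rather than phrase-level timing. Here is a rough sketch of that step, assuming the speech-to-text pass yields one `(text, start, end)` entry per recognized word. The `Word` type, the `word_ranges` helper and the `padding_ms` parameter are hypothetical names for illustration, not part of audiogrep.

```python
from typing import Iterable, List, NamedTuple, Tuple


class Word(NamedTuple):
    """A single recognized word and its timing in seconds."""
    text: str
    start: float
    end: float


def word_ranges(
    words: Iterable[Word], targets: Iterable[str], padding_ms: int = 0
) -> List[Tuple[int, int]]:
    """Return (start_ms, end_ms) for each occurrence of any target word.

    padding_ms widens every cut on both sides so words are not clipped.
    """
    wanted = {t.lower() for t in targets}
    ranges = []
    for w in words:
        if w.text.lower() in wanted:
            start = max(0, round(w.start * 1000) - padding_ms)
            end = round(w.end * 1000) + padding_ms
            ranges.append((start, end))
    return ranges


# Made-up word timings, standing in for real speech-to-text output.
words = [
    Word("money", 0.0, 0.4),
    Word("makes", 0.4, 0.7),
    Word("people", 0.7, 1.2),
    Word("want", 1.2, 1.5),
    Word("money", 1.5, 1.9),
]

cuts = word_ranges(words, ["money", "people"], padding_ms=50)
print(cuts)  # [(0, 450), (650, 1250), (1450, 1950)]
```

Each pair can then be cut out of the source audio in order, for example with pydub's millisecond slicing. A little padding tends to sound less abrupt, at the cost of catching fragments of neighbouring words.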

It’s also possible to use the script to create “frankenstein” sentences. Here’s Bill Clinton telling us to stop voting, sourced from his book My Life:

[soundcloud url="https://api.soundcloud.com/tracks/192352609" params="color=ff5500&auto_play=false&hide_related=false&show_comments=true&show_user=true&show_reposts=false" width="100%" height="166" iframe="true" /]
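A “frankenstein” sentence boils down to looking up each word of the target sentence in the word-level transcript and queueing the cuts in sentence order. The sketch below shows one way that lookup might work. It is an assumption about the approach, not audiogrep's implementation: `Word` and `build_sentence` are hypothetical names, the timings are invented, and it simply takes the first occurrence of each word.

```python
from typing import Dict, Iterable, List, NamedTuple, Tuple


class Word(NamedTuple):
    """A single recognized word and its timing in seconds."""
    text: str
    start: float
    end: float


def build_sentence(words: Iterable[Word], sentence: str) -> List[Tuple[int, int]]:
    """Return one (start_ms, end_ms) cut per word of sentence, in sentence order."""
    # Index every occurrence of every word by its lowercased text.
    index: Dict[str, List[Tuple[int, int]]] = {}
    for w in words:
        cut = (round(w.start * 1000), round(w.end * 1000))
        index.setdefault(w.text.lower(), []).append(cut)

    cuts = []
    for token in sentence.lower().split():
        if token not in index:
            raise KeyError(f"word not found in transcript: {token!r}")
        cuts.append(index[token][0])  # simplest choice: the first occurrence
    return cuts


# Made-up word timings, standing in for real speech-to-text output.
words = [
    Word("never", 0.0, 0.5),
    Word("stop", 0.5, 0.9),
    Word("working", 0.9, 1.5),
    Word("for", 1.5, 1.7),
    Word("voting", 1.7, 2.2),
    Word("rights", 2.2, 2.8),
]

cuts = build_sentence(words, "stop voting")
print(cuts)  # [(500, 900), (1700, 2200)]
```

Picking the first occurrence is the laziest option. Choosing among occurrences by ear, or at random, would probably give more natural results.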

And, by integrating moviepy, you can generate video slideshows like these or this:

[youtube https://www.youtube.com/watch?v=C6tpAGD00DM w=640]

The code is available on GitHub. Next up I’ll be integrating some of this functionality into videogrep for more refined searches.

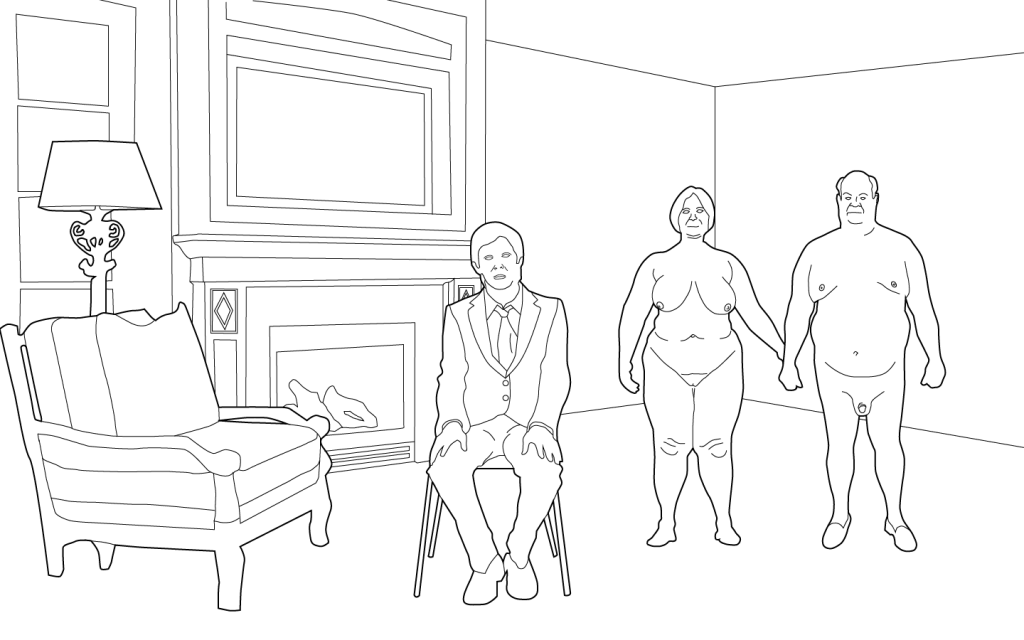

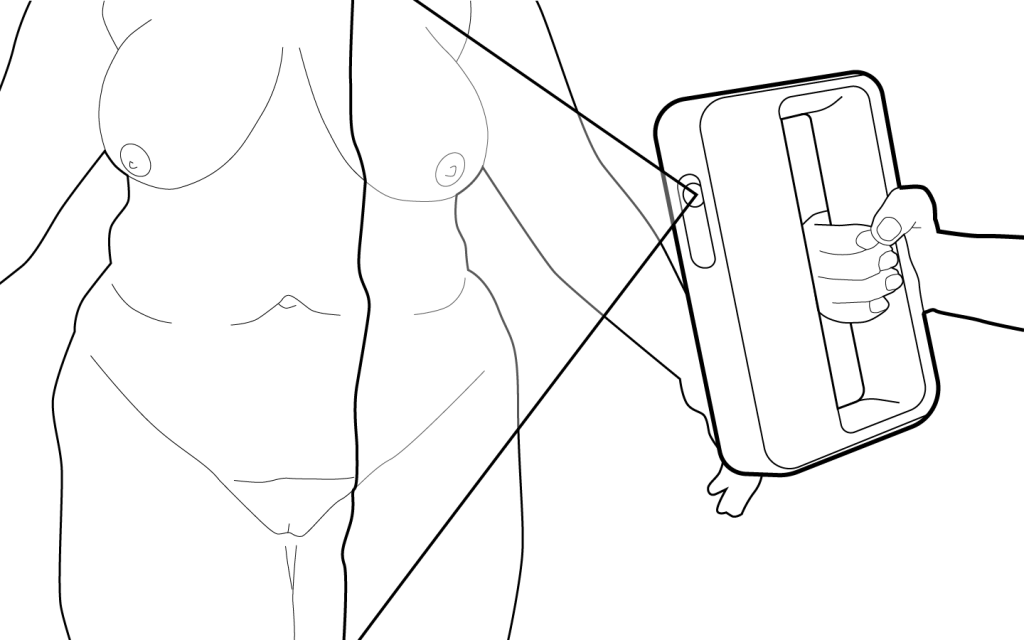

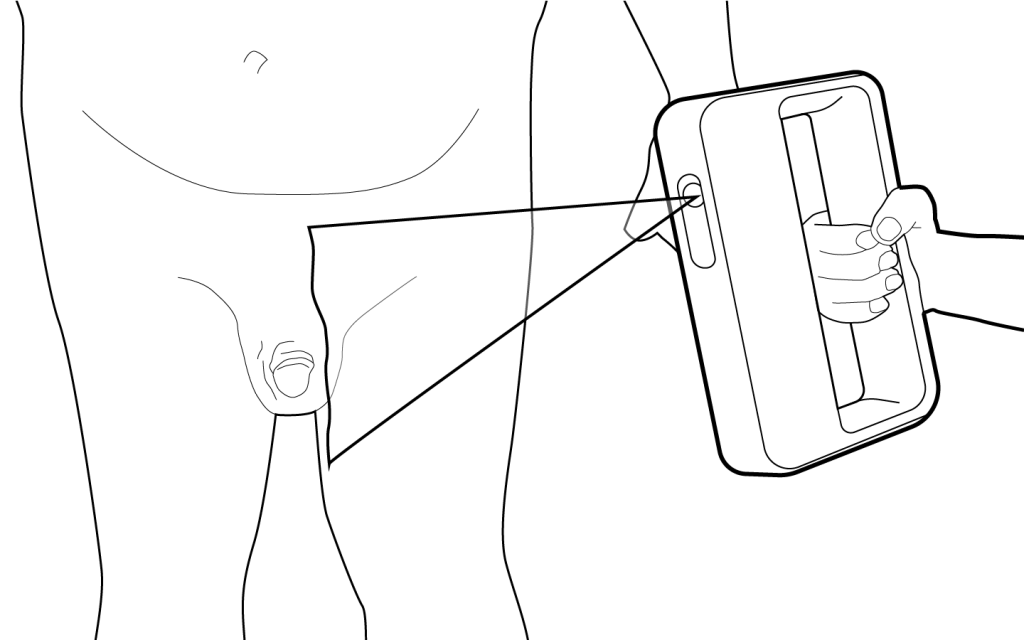

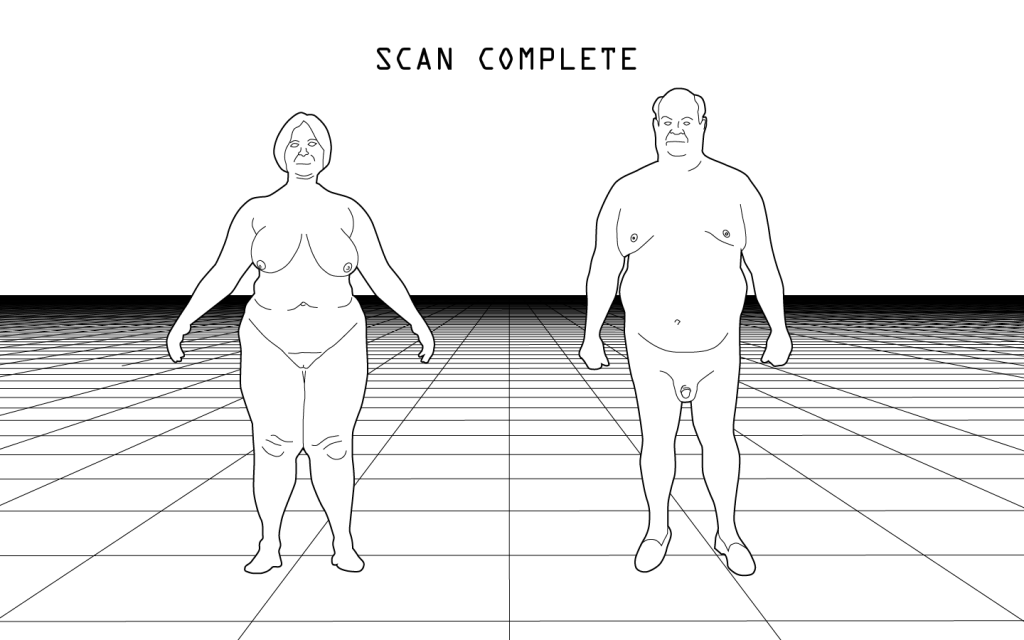

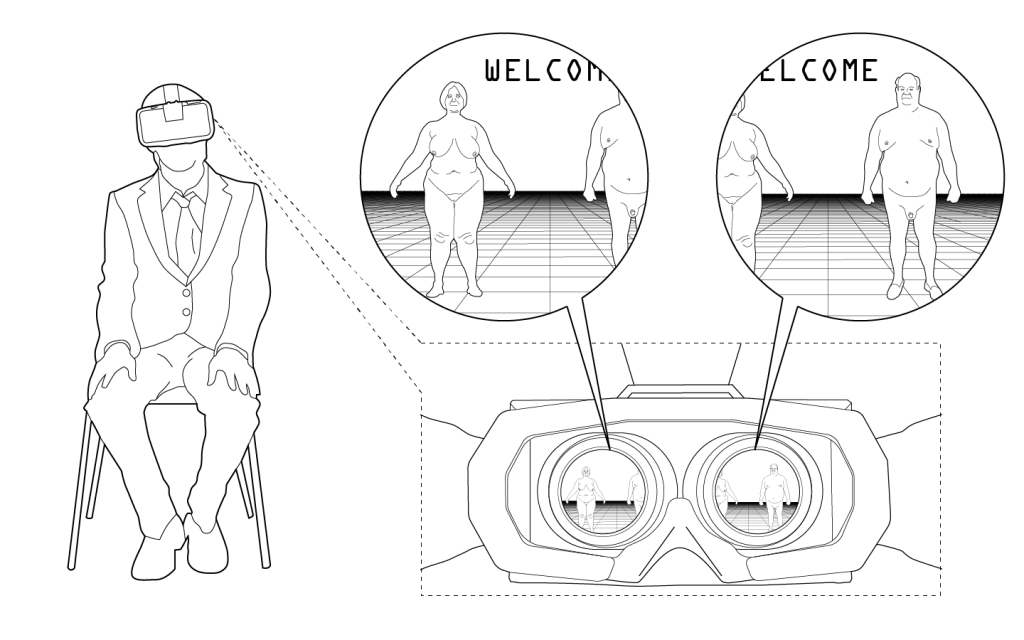

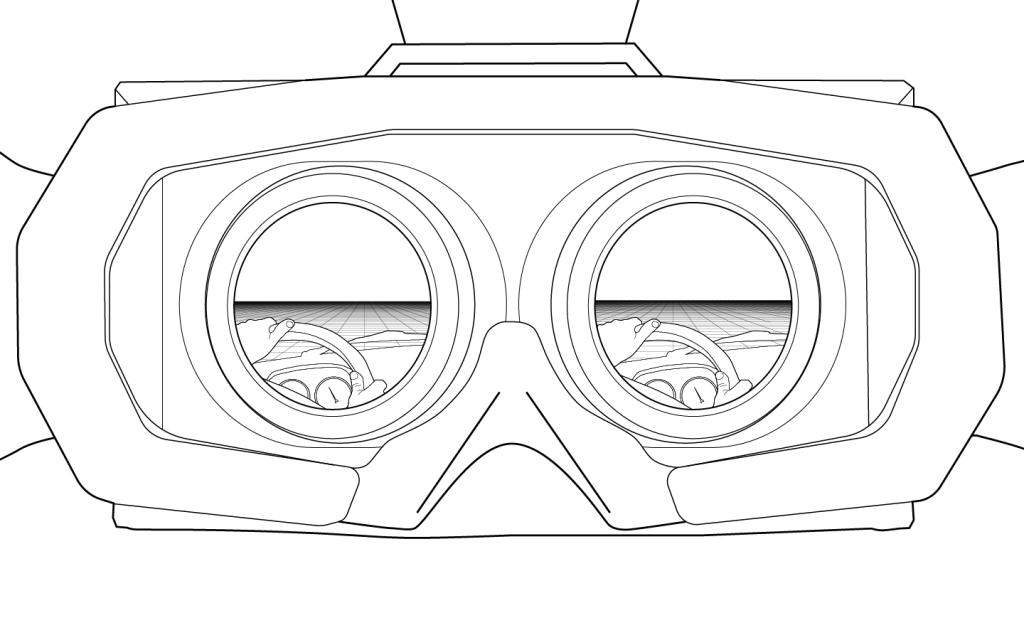

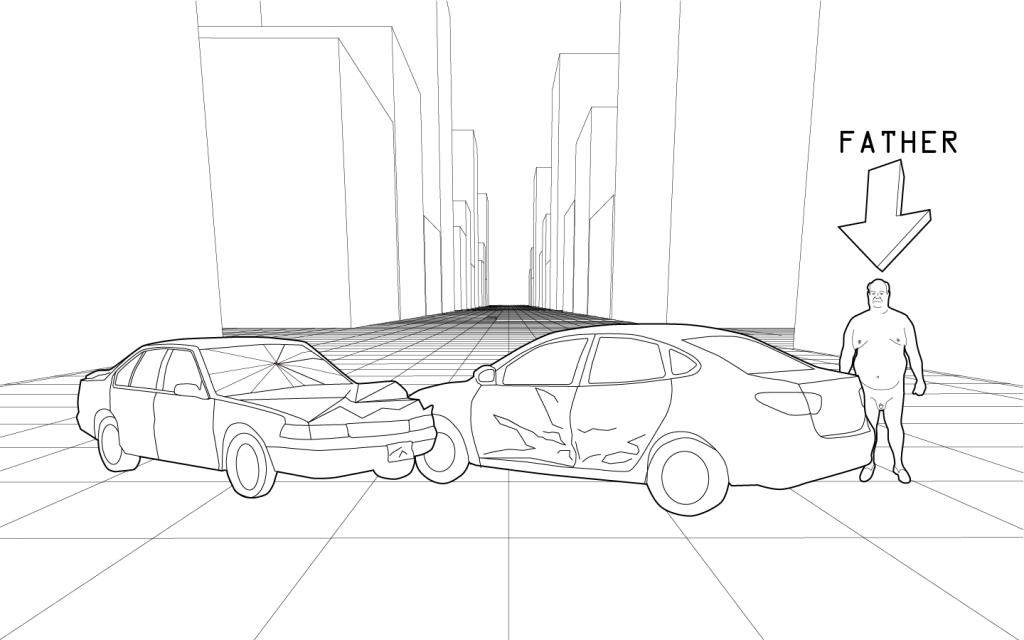

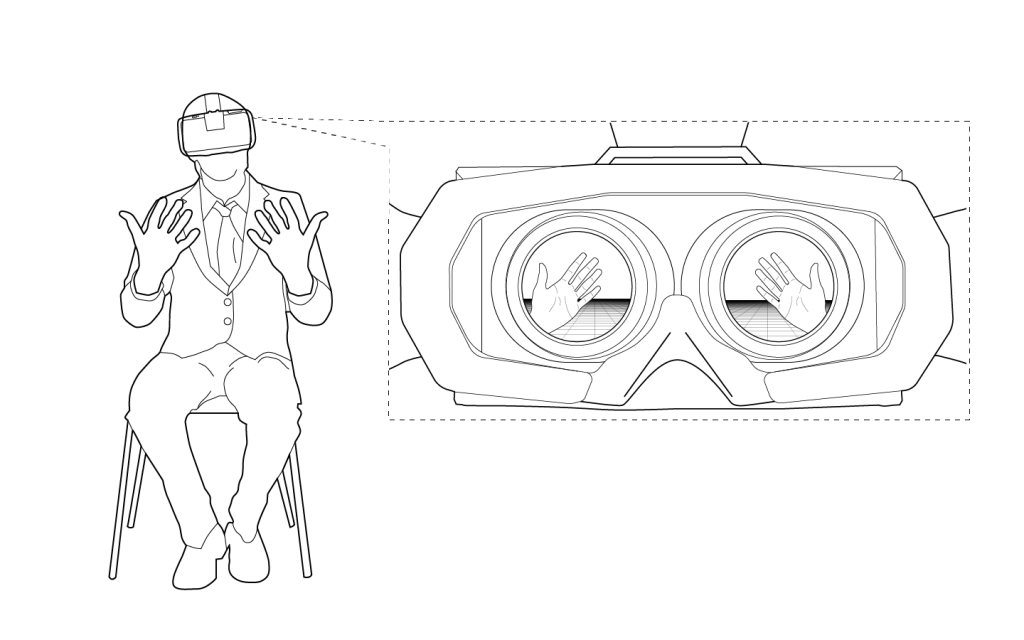

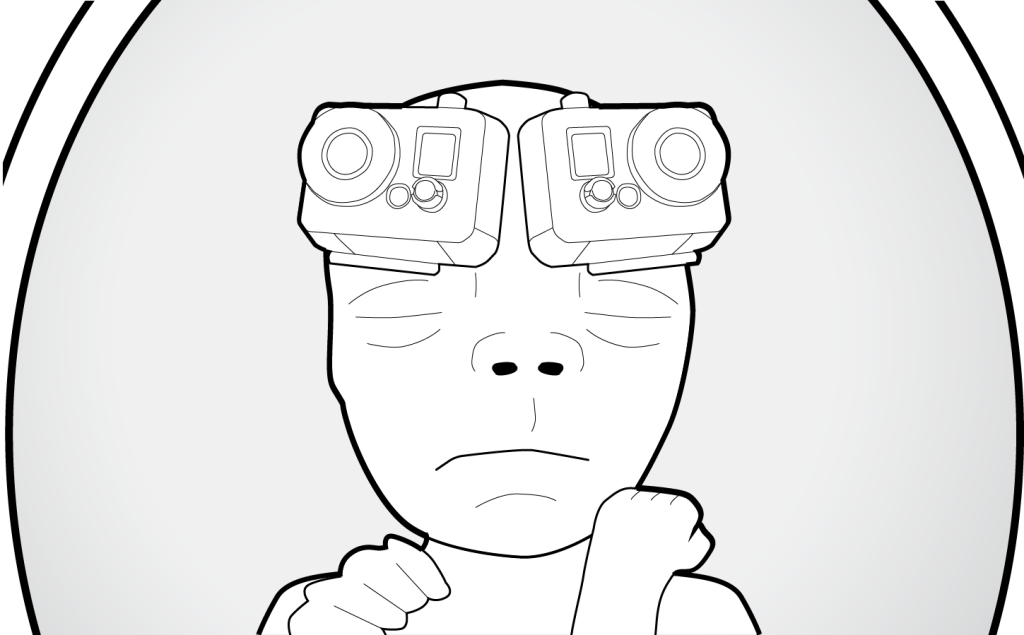

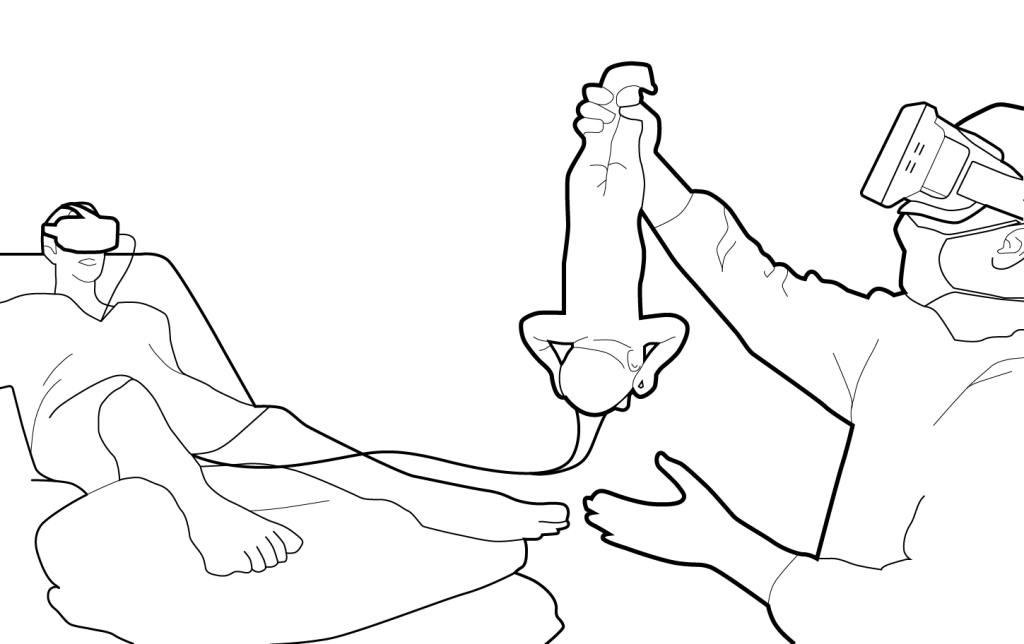

Oculus Oedipus

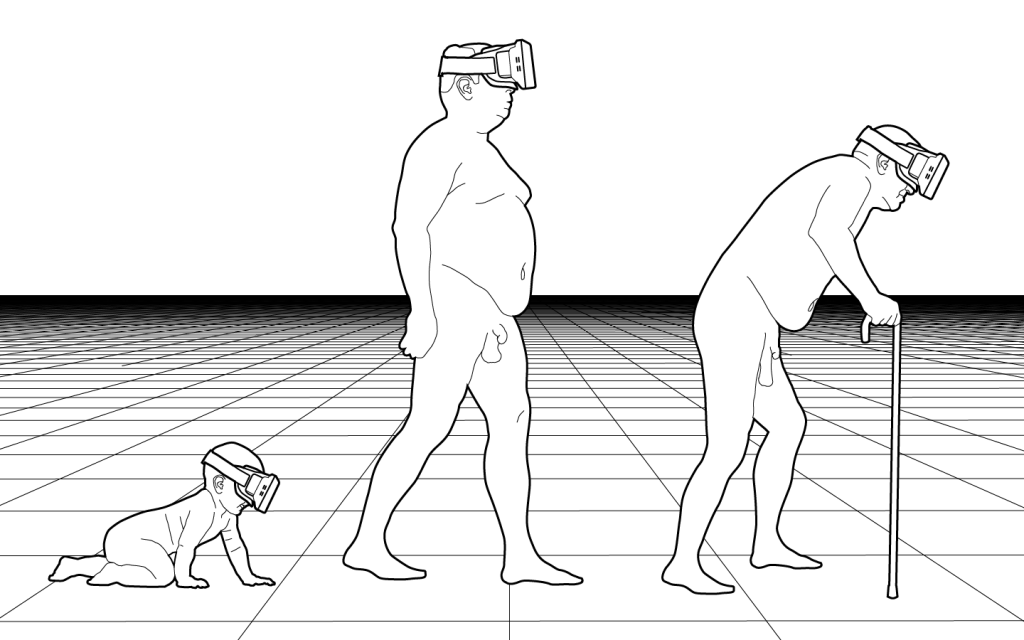

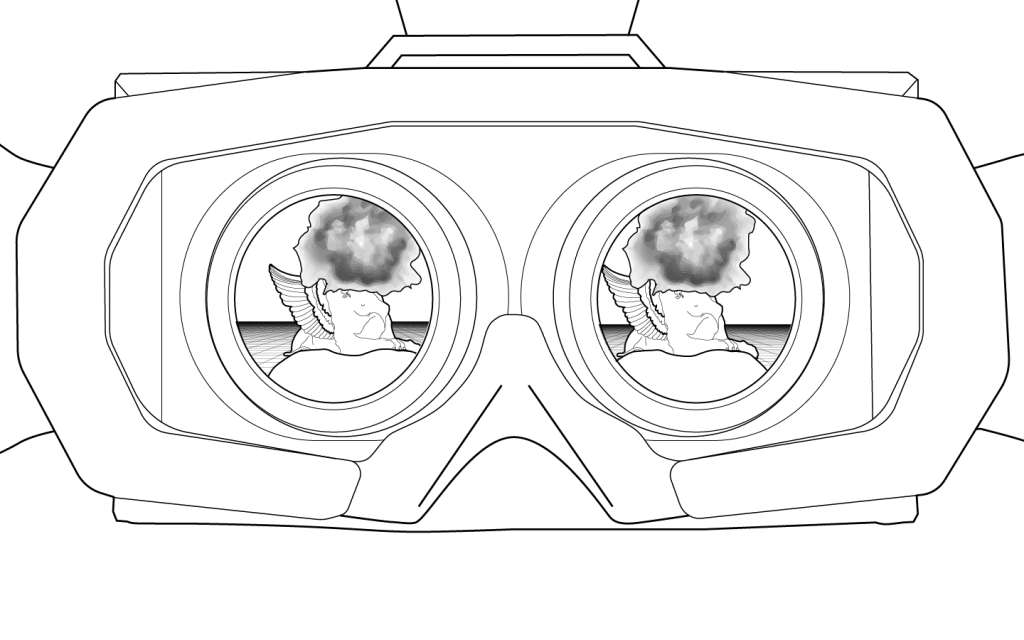

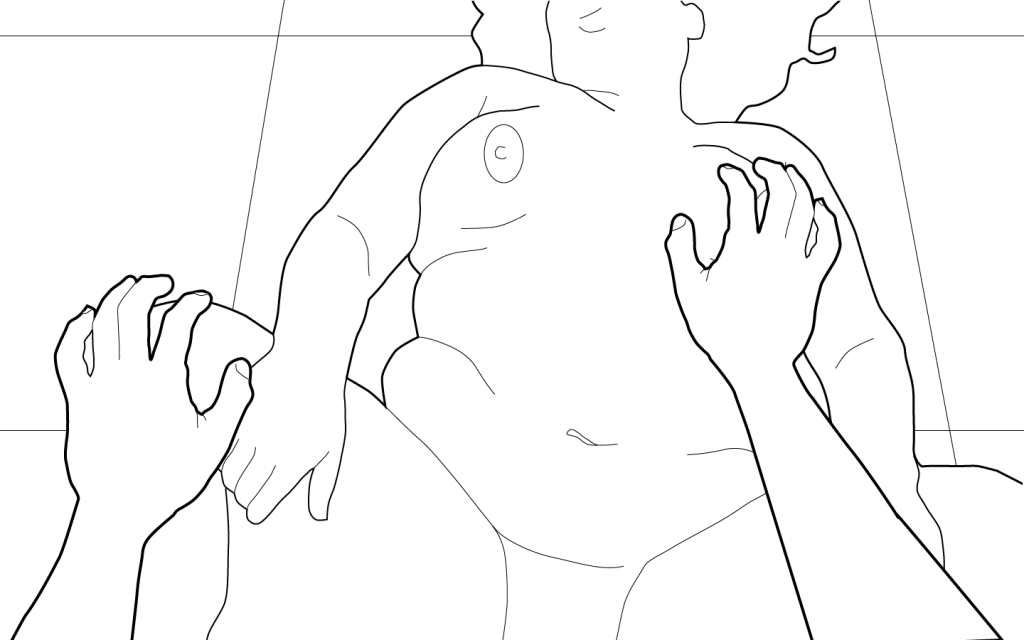

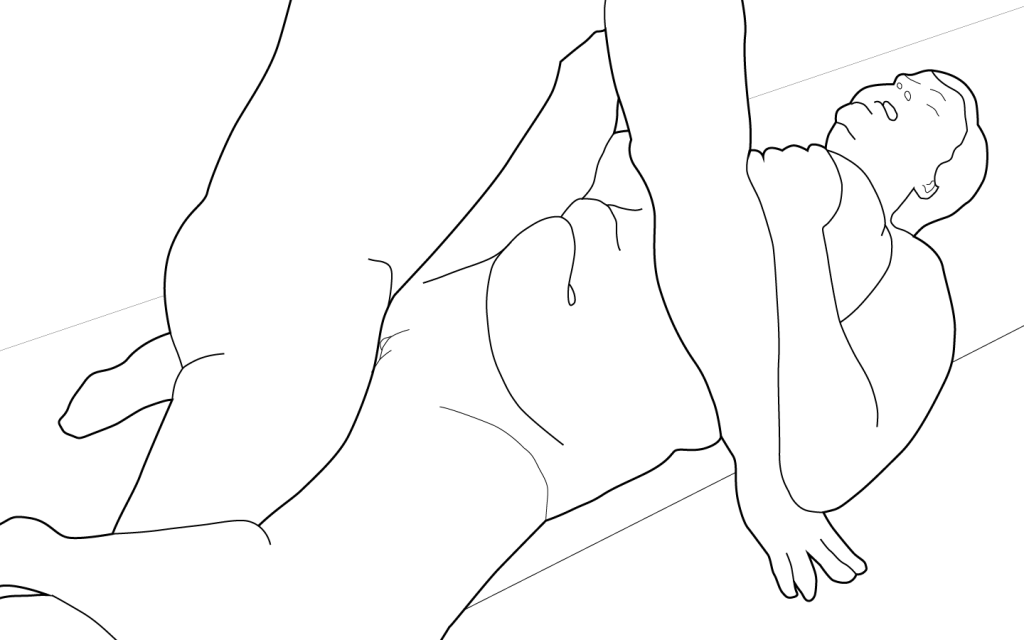

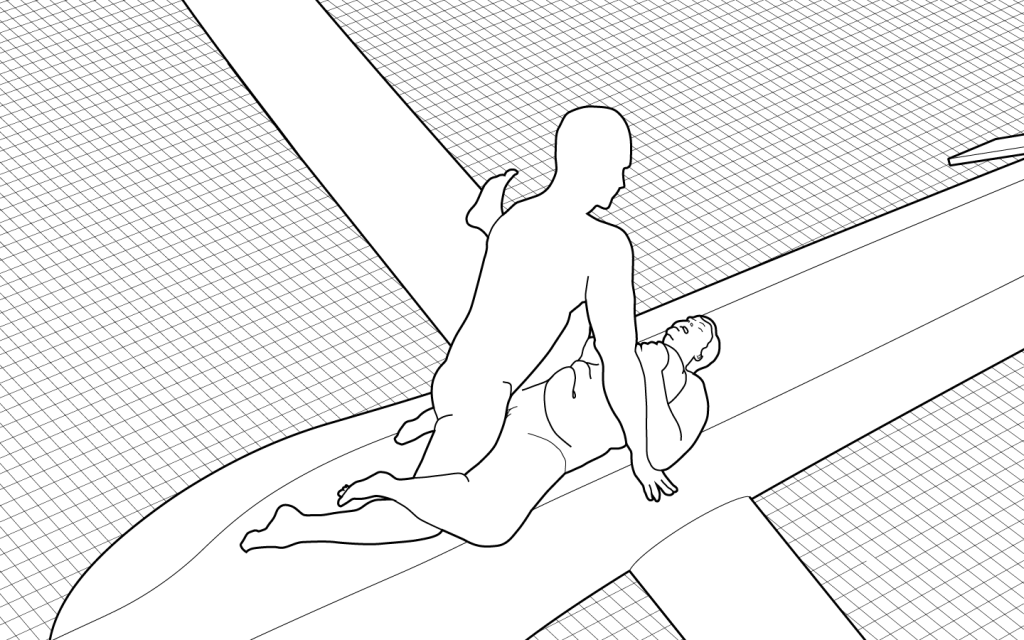

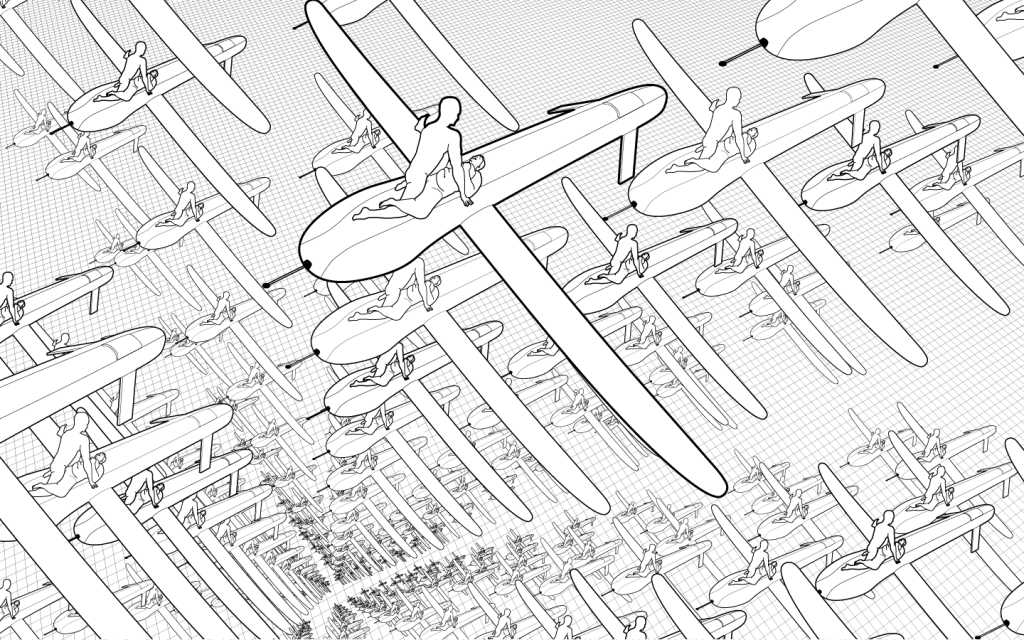

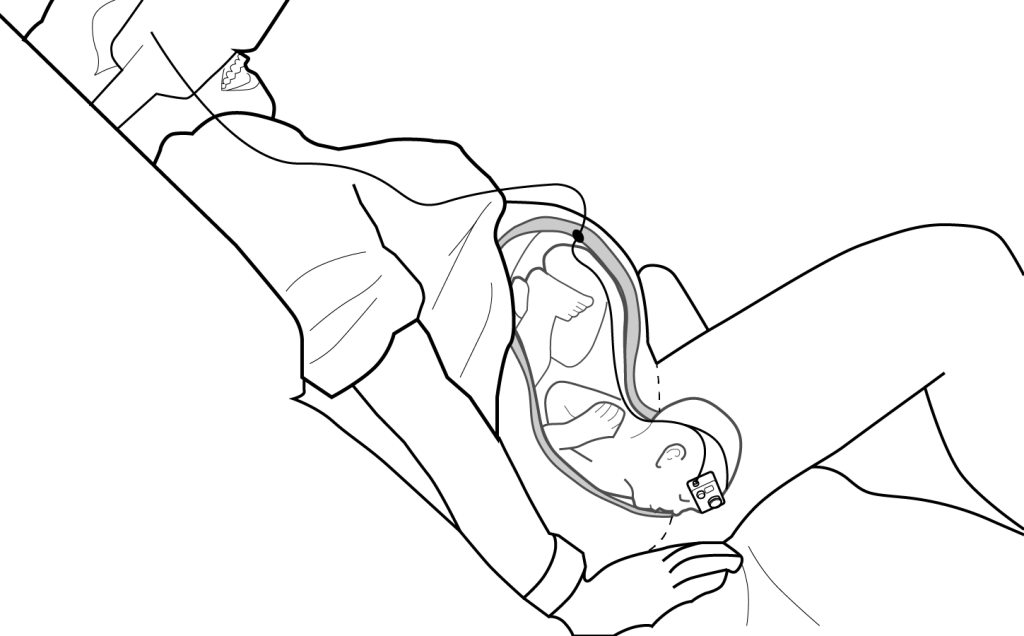

This is part 2 of a series of speculative virtual reality projects. Illustrations by David Tracy. Also appears in The New Inquiry.

Preparation

Stage 1: Father

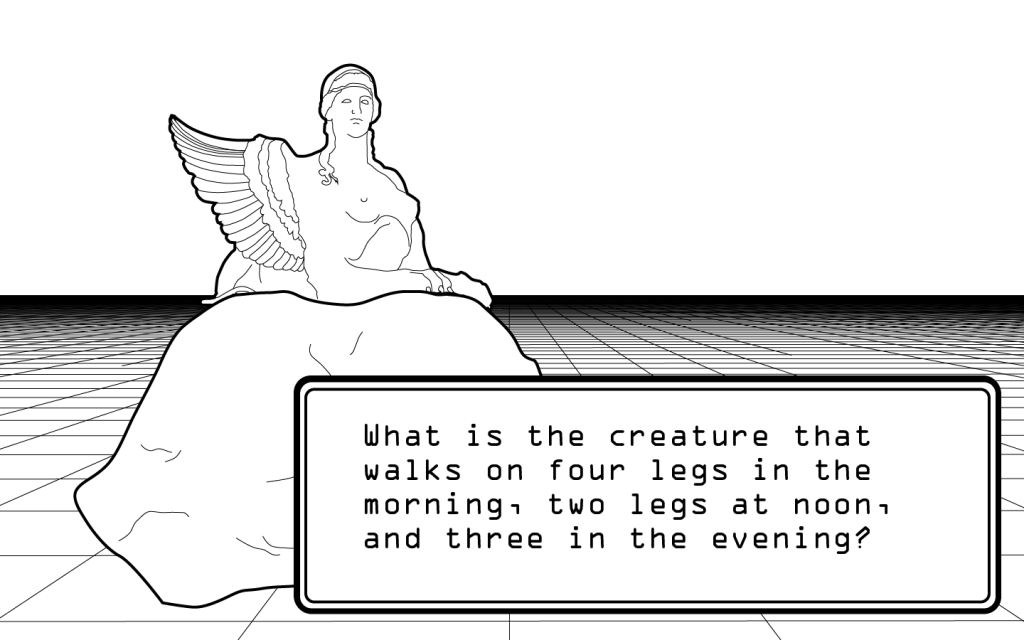

Stage 2: Sphinx

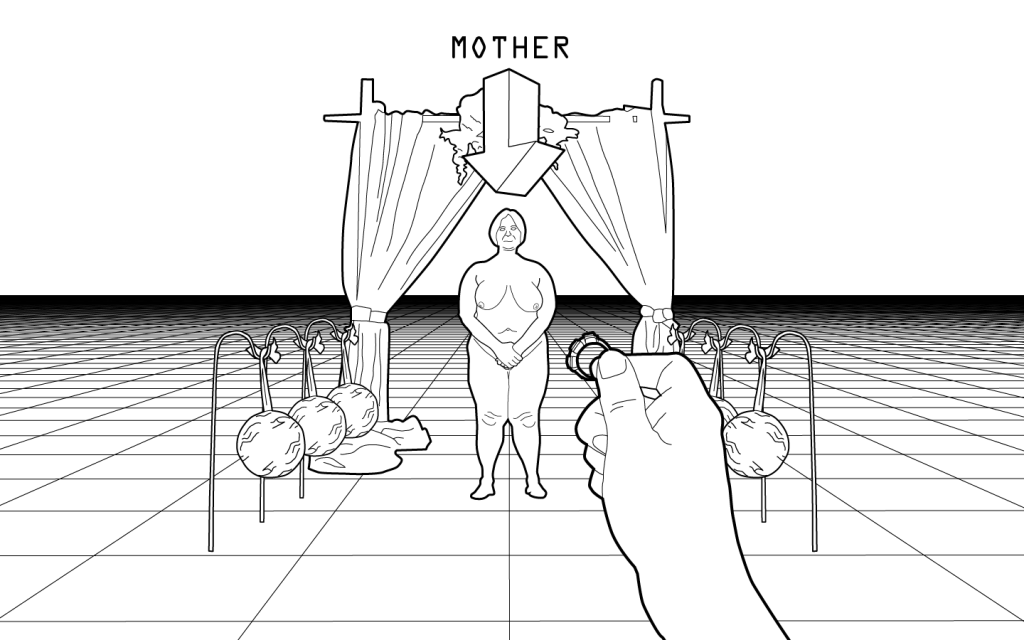

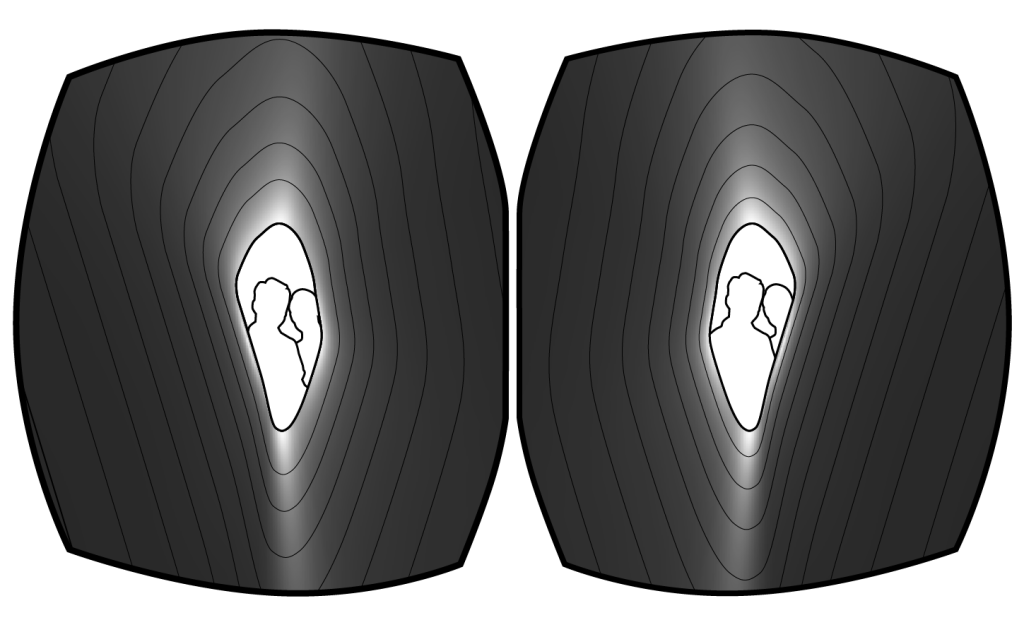

Stage 3: Mother

Postscript

Oculus Birth

This is part 1 of a series of speculative virtual reality projects. Illustrations by David Tracy. Also appears in The New Inquiry.

Case Study

Here is some initial documentation for “Case Study”, a project that I’m working on with Pedro G. C. Oliveira.

“Case Study” is a briefcase that analyzes and produces visualizations of literary/philosophical texts using weaponized natural language processing software originally developed by the military. The parsing software, which finds and tags geopolitical events and world-historical actors in news articles, was originally intended to be a tool to help governments make predictions about global trends and material conflicts. In Case Study, we use that same tool on literary and philosophical texts rather than news articles, producing visualizations that frame literary and philosophical events in the language of geopolitics and the military.